Therefore, you might use Cross-Validate Model in the initial phase of building and testing your model, to evaluate the goodness of the model parameters (assuming that computation time is tolerable), and then train and evaluate your model using the established parameters with the Train Model and Evaluate Model modules. There are two main ways to use cross-validation.Ĭross-validation can take a long time to run if you use a lot of data. However, because cross-validation trains and validates the model multiple times over a larger dataset, it is much more computationally intensive and takes much longer than validating on a random split.Ĭross-validation evaluates the dataset as well as the model.Ĭross-validation does not simply measure the accuracy of a model, but also gives you some idea of how representative the dataset is and how sensitive the model might be to variations in the data. In contrast, if you validate a model by using data generated from a random split, typically you evaluate the model only on 30% or less of the available data. That is, cross-validation uses the entire training dataset for both training and evaluation, instead of some portion. However, cross-validation offers some advantages:Ĭross-validation measures the performance of the model with the specified parameters in a bigger data space. You should review these metrics to see whether any single fold has particularly high or low accuracyĪ different, and very common way of evaluating a model is to divide the data into a training and test set using Split Data, and then validate the model on the training data. When the building and evaluation process is complete for all folds, Cross-Validate Model generates a set of performance metrics and scored results for all the data. Different statistics are used to evaluate classification models vs. Which statistics are used depends on the type of model that you are evaluating.

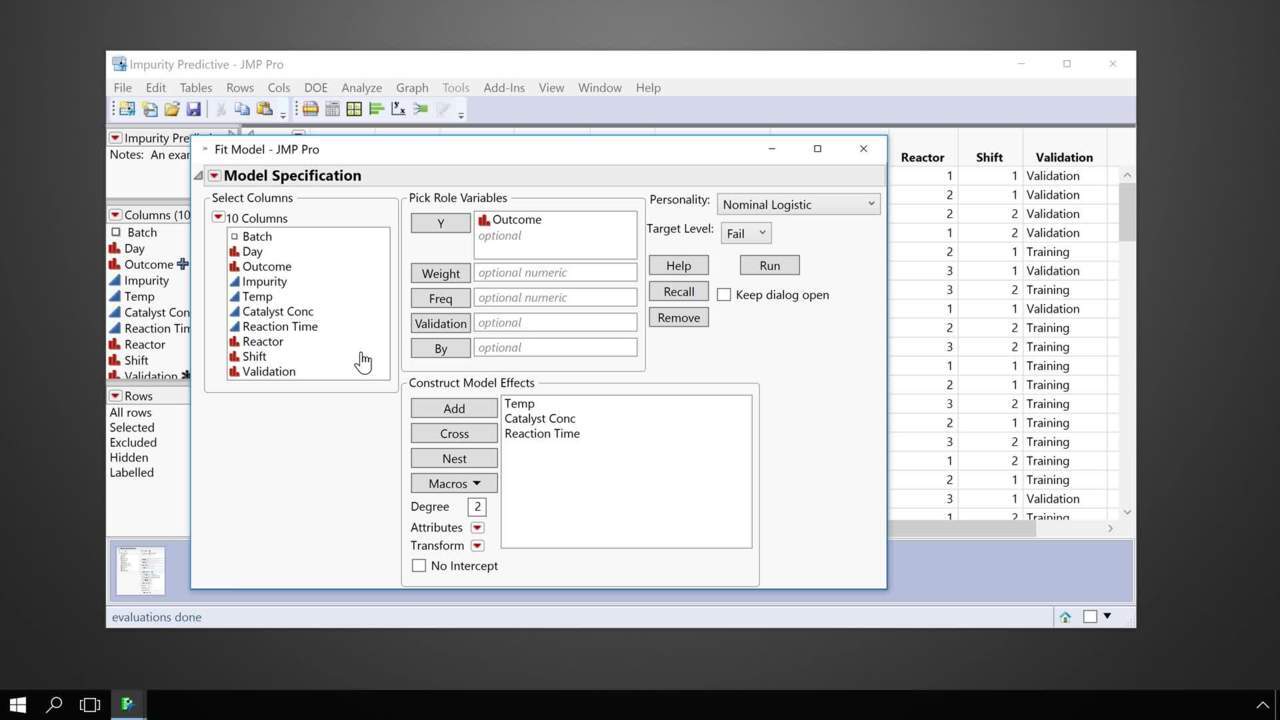

The module sets aside the data in fold 1 to use for validation (this is sometimes called the holdout fold), and uses the remaining folds to train a model.įor example, if you create five folds, the module would generate five models during cross-validation, each model trained using 4/5 of the data, and tested on the remaining 1/5.ĭuring testing of the model for each fold, multiple accuracy statistics are evaluated. To divide the dataset into a different number of folds, you can use the Partition and Sample module and indicate how many folds to use.The algorithm defaults to 10 folds if you have not previously partitioned the dataset.How cross-validation worksĬross validation randomly divides the training data into a number of partitions, also called folds. By comparing the accuracy statistics for all the folds, you can interpret the quality of the data set and understand whether the model is susceptible to variations in the data.Ĭross-validate also returns predicted results and probabilities for the dataset, so that you can assess the reliability of the predictions.

It divides the dataset into some number of subsets ( folds), builds a model on each fold, and then returns a set of accuracy statistics for each fold. The Cross-Validate Model module takes as input a labeled dataset, together with an untrained classification or regression model. Cross-validation is an important technique often used in machine learning to assess both the variability of a dataset and the reliability of any model trained using that data.

#SAS JMP MODEL CROSS VALIDATION HOW TO#

This article describes how to use the Cross-Validate Model module in Machine Learning Studio (classic). Similar drag-and-drop modules are available in Azure Machine Learning designer.

Applies to: Machine Learning Studio (classic) only
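The fold procedure described under "How cross-validation works" can be illustrated outside Studio (classic) with a short Python sketch. This is not the module's implementation: the function names (`make_folds`, `fit_line`, `cross_validate`), the toy least-squares model, the synthetic data, and the choice of mean absolute error as the per-fold statistic are all assumptions made for the example. It only shows the general pattern of holding out one fold, training on the rest, and collecting one statistic per fold.

```python
import random
import statistics


def make_folds(n_rows, n_folds=10, seed=0):
    """Randomly assign row indices to n_folds partitions of near-equal size."""
    indices = list(range(n_rows))
    random.Random(seed).shuffle(indices)
    # Striding over the shuffled indices keeps fold sizes within one row of each other.
    return [indices[i::n_folds] for i in range(n_folds)]


def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept (toy stand-in model)."""
    mean_x = statistics.fmean(xs)
    mean_y = statistics.fmean(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def cross_validate(xs, ys, n_folds=10, seed=0):
    """Train on all folds but one, score the holdout fold, and repeat for every fold."""
    folds = make_folds(len(xs), n_folds, seed)
    fold_mae = []
    scored = {}  # row index -> prediction made while that row was held out
    for holdout in folds:
        held = set(holdout)
        train = [i for i in range(len(xs)) if i not in held]
        slope, intercept = fit_line([xs[i] for i in train], [ys[i] for i in train])
        errors = []
        for i in holdout:
            scored[i] = slope * xs[i] + intercept
            errors.append(abs(scored[i] - ys[i]))
        fold_mae.append(statistics.fmean(errors))
    return fold_mae, scored


# Synthetic data, invented for this example: y = 3x + 2 plus Gaussian noise.
rng = random.Random(42)
xs = [i / 10 for i in range(200)]
ys = [3 * x + 2 + rng.gauss(0, 1) for x in xs]

fold_mae, scored = cross_validate(xs, ys, n_folds=5)
for number, mae in enumerate(fold_mae, start=1):
    print(f"fold {number}: mean absolute error = {mae:.3f}")
print(f"mean = {statistics.fmean(fold_mae):.3f}, "
      f"standard deviation = {statistics.stdev(fold_mae):.3f}")
```

With five folds, each model in this sketch is trained on 4/5 of the rows and every row is scored exactly once, while it is held out. A fold whose error stands far from the others, or a large standard deviation across folds, is the kind of signal the article suggests looking for when judging how sensitive a model is to variations in the data.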